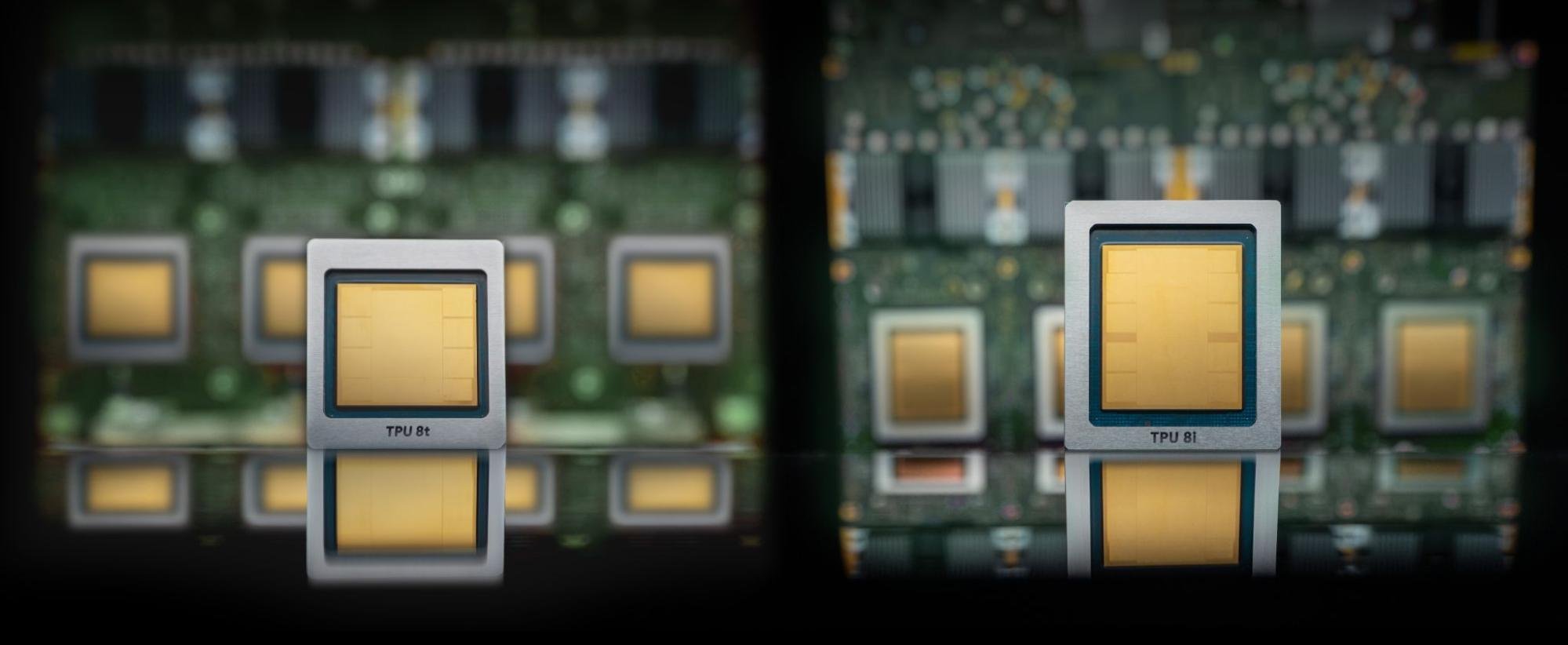

Google powers many of its AI-driven products, including Search, Photos, Maps, and Gemini, with its custom-built Tensor Processing Units (TPUs). Unlike general-purpose chips, TPUs are accelerators designed specifically for machine learning workloads, from large language models and AI agents to computer vision systems and recommendation engines.

Google’s next wave of TPU development appears focused on two of modern AI’s biggest bottlenecks: memory efficiency and inference speed. With TPU v8i, the company is expected to expand on-chip SRAM so that large Key-Value (KV) caches, which hold the attention keys and values of tokens a model has already processed, can stay directly on the chip instead of being repeatedly fetched from slower off-chip memory. This architecture is designed to support the growing demands of agentic AI systems: models capable of multi-step reasoning, persistent context handling, and continuous reinforcement learning.
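
During autoregressive generation, a transformer computes a key vector and a value vector for every token it processes. Caching them means each new token only needs its own key and value computed, but attention still reads every cached entry on every step, so the cache grows with context length and is touched constantly. The toy sketch below illustrates that general mechanism for a single attention head in plain Python. `KVCache` and `decode_step` are hypothetical names, and this is not a description of how TPU v8i or Google's software actually stores or reads the cache.

```python
import math


class KVCache:
    """Append-only store of keys and values for one attention head (toy model)."""

    def __init__(self):
        self.keys = []    # one key vector per token seen so far
        self.values = []  # one value vector per token seen so far

    def append(self, k, v):
        self.keys.append(k)
        self.values.append(v)


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def decode_step(q, k, v, cache):
    """Scaled dot-product attention for one newly generated token.

    Only the new token's k and v are added to the cache; keys and values of
    earlier tokens are read back from the cache instead of being recomputed.
    """
    cache.append(k, v)
    scale = 1.0 / math.sqrt(len(q))
    weights = _softmax([_dot(q, key) * scale for key in cache.keys])
    dim = len(v)
    # Weighted sum of every cached value vector: the whole cache is read each step.
    return [sum(w * val[i] for w, val in zip(weights, cache.values))
            for i in range(dim)]


if __name__ == "__main__":
    cache = KVCache()
    # Hypothetical 2-dimensional (q, k, v) vectors for three generated tokens.
    tokens = [([1.0, 0.0], [1.0, 0.0], [0.0, 0.0]),
              ([1.0, 0.0], [1.0, 0.0], [2.0, 4.0]),
              ([0.0, 1.0], [0.0, 1.0], [1.0, 1.0])]
    for q, k, v in tokens:
        out = decode_step(q, k, v, cache)
        print(f"cached tokens: {len(cache.keys)}, output: {out}")
```

The design choice that matters for hardware is visible in `decode_step`: compute per step stays small, but the amount of cached data read grows with every token, which is why where the cache lives (on-chip SRAM versus off-chip memory) affects speed.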

The result could be lower latency, less time that compute cores sit idle waiting for data, and more predictable performance across advanced reasoning tasks. In practical terms, that means faster responses, more efficient processing, and stronger support for AI systems that need to reason through multiple steps before delivering an answer.
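
A back-of-envelope estimate shows why memory speed can dominate generation latency. A KV cache for one sequence takes roughly 2 × layers × KV heads × head dimension × tokens × bytes per element, and each decode step must read all of it once, so the time spent just moving that data is at least its size divided by memory bandwidth. The sketch below computes both quantities; the function names, the model shape, and both bandwidth figures are hypothetical illustrations, not published TPU v8i specifications.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_elem=2):
    """Approximate size of a transformer KV cache for one sequence.

    The leading 2 counts one key and one value vector per token, per KV head,
    per layer. bytes_per_elem=2 assumes a 16-bit format such as bfloat16.
    """
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_elem


def min_read_time_s(num_bytes, bandwidth_bytes_per_s):
    """Lower bound on the time to stream num_bytes once at the given bandwidth.

    Ignores compute, model-weight reads, and any overlap, so real step times are higher.
    """
    return num_bytes / bandwidth_bytes_per_s


if __name__ == "__main__":
    # Hypothetical model shape, not any specific Google model.
    cache = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, tokens=8192)
    print(f"KV cache: {cache / 2**30:.2f} GiB")
    # Two hypothetical memory bandwidths in bytes per second.
    for label, bw in [("slower memory", 1e12), ("faster memory", 1e13)]:
        t_ms = min_read_time_s(cache, bw) * 1e3
        print(f"{label}: >= {t_ms:.3f} ms per decode step")
```

Because the cache grows linearly with context length, long-running agentic sessions push this cost up. Bandwidth is only half the picture: capacity determines how much of the cache can actually stay on chip.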

If the design performs as expected, it would mark an important step for the AI industry. By tailoring hardware to the specific needs of next-generation AI instead of carrying the overhead of general-purpose chip architectures, Google increases competitive pressure on rival AI chipmakers such as NVIDIA and AMD, as well as other data center hardware providers.

Ultimately, Google’s continued investment in Cloud TPU infrastructure signals a broader strategy: making its cloud platform a leading destination for companies building and deploying large-scale AI systems.